The Great Unlock

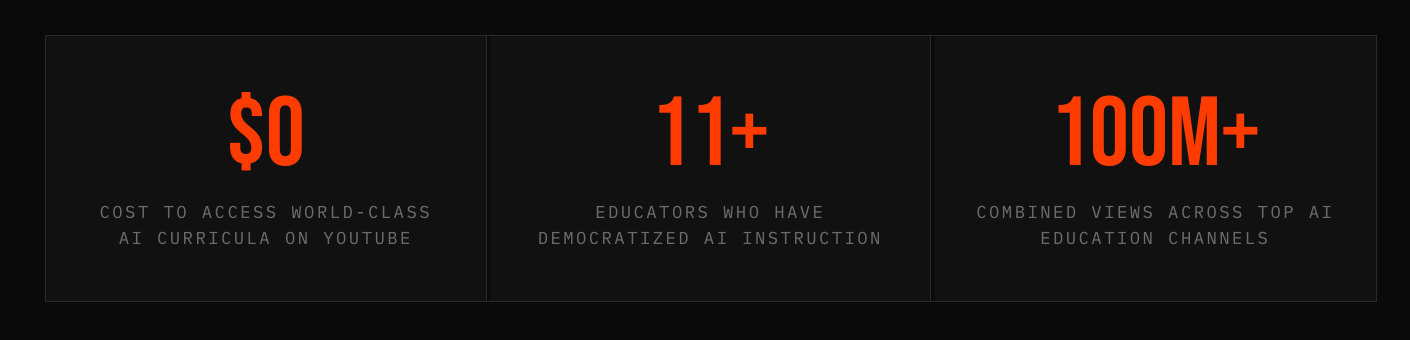

How a handful of YouTube channels and one very stubborn idea — that world-class AI education should cost nothing — are dismantling the last credentialist gatekeepers in tech.

There is a moment in one of Andrej Karpathy's YouTube videos — somewhere around the ninety-minute mark, deep inside a lecture on backpropagation through time — where the former Tesla AI director pauses, looks directly into his webcam, and says something that no tenured professor would dare admit in a formal classroom: "I'll be honest, I had to re-derive this myself last week." The audience for this confession isn't a seminar room of twenty PhD candidates. It's 1.4 million subscribers.

That moment, small and throwaway as it sounds, says everything about what has happened to AI education in the last five years. The field's most brilliant practitioners have stopped waiting for institutions to create curricula. They've opened browser tabs, pointed cameras at whiteboards, and started talking. The result is the most radical democratization of technical knowledge since the invention of the printing press — and it's happening on a platform best known for cat videos and prank compilations.

We are living through what might be called the Great Unlock: a period in which the intellectual infrastructure of artificial intelligence — once hermetically sealed behind university tuition, corporate NDAs, and the invisible velvet rope of pedigree — has become nearly free and universally accessible. The gatekeepers did not give up gracefully. They were simply routed.

The Accidental Curriculum

Nobody planned this. There was no Ministry of AI Education that decided the world needed free alternatives to Stanford's tuition bills. What happened instead was messier, more organic, and ultimately more durable: a loose collection of researchers, statisticians, and engineers independently concluded that the best use of their platform was to teach.

The oldest and perhaps most foundational thread in this story runs through 3Blue1Brown, the channel built by Grant Sanderson. Before Sanderson came along, the standard approach to explaining neural networks involved either incomprehensible academic notation or hand-wavy analogies that obscured more than they revealed. Sanderson did something deceptively simple: he made the math visible. Using a custom animation engine he built himself, he rendered the abstract geometry of gradient descent into something you could actually watch unfold in real time. His "Neural Networks" series has become the universal entry point — the thing educators across the internet tell beginners to watch first, before code, before papers, before anything else.

The visual intuition Sanderson provides acts as a kind of cognitive scaffold. Students who would have bounced off a textbook's notation find themselves, thirty minutes later, genuinely understanding what a sigmoid function is doing and why it matters. It is, in the truest sense of the word, a pedagogical breakthrough — and it lives on YouTube, sandwiched between advertisements for mattresses and meal kits.
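Here is a minimal sketch of those two ideas side by side: a single sigmoid neuron trained by plain gradient descent. It is not code from Sanderson's videos. The toy dataset, learning rate, and step count are illustrative assumptions. The sketch uses a cross-entropy loss because that loss makes the gradient with respect to the neuron's input reduce to the simple difference `p - y`.

```python
import math

def sigmoid(z):
    """Squash any real number into the open interval (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

# Toy data (an illustrative assumption): negative inputs should map to 0, positive to 1.
data = [(-2.0, 0), (-1.0, 0), (1.0, 1), (2.0, 1)]

w, b = 0.0, 0.0        # start from an untrained neuron
learning_rate = 0.5    # step size, picked by hand for this toy problem

for step in range(1000):
    grad_w = grad_b = 0.0
    for x, y in data:
        p = sigmoid(w * x + b)
        # For sigmoid + cross-entropy, dLoss/dz = (p - y); the chain rule adds a factor of x for w.
        grad_w += (p - y) * x
        grad_b += (p - y)
    # Step "downhill": move each parameter against its averaged gradient.
    w -= learning_rate * grad_w / len(data)
    b -= learning_rate * grad_b / len(data)

print(f"w={w:.2f}, b={b:.2f}")
print([round(sigmoid(w * x + b), 3) for x, _ in data])
```

The outputs for the two negative inputs should fall below 0.5, and the outputs for the two positive inputs should rise above it. Watching `w` and `b` shift step by step is the numeric counterpart of the downhill motion the animations depict.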

From Sanderson's visual foundation, the natural next step has historically been Stanford CS229, Andrew Ng's legendary machine learning course, which Stanford began releasing openly online years before MOOCs became fashionable. Ng, who co-founded both Coursera and Google Brain, is arguably the most effective AI educator alive. His gift is not brilliance, though he has that too, but patience. He has an almost preternatural ability to sense where a learner is likely to get lost and to explain his way around the obstacle before the learner reaches it. CS229 gave the world one of its first real tastes of university-grade AI instruction at no cost, and its influence on the current generation of practitioners is difficult to overstate.

Learning Top-Down

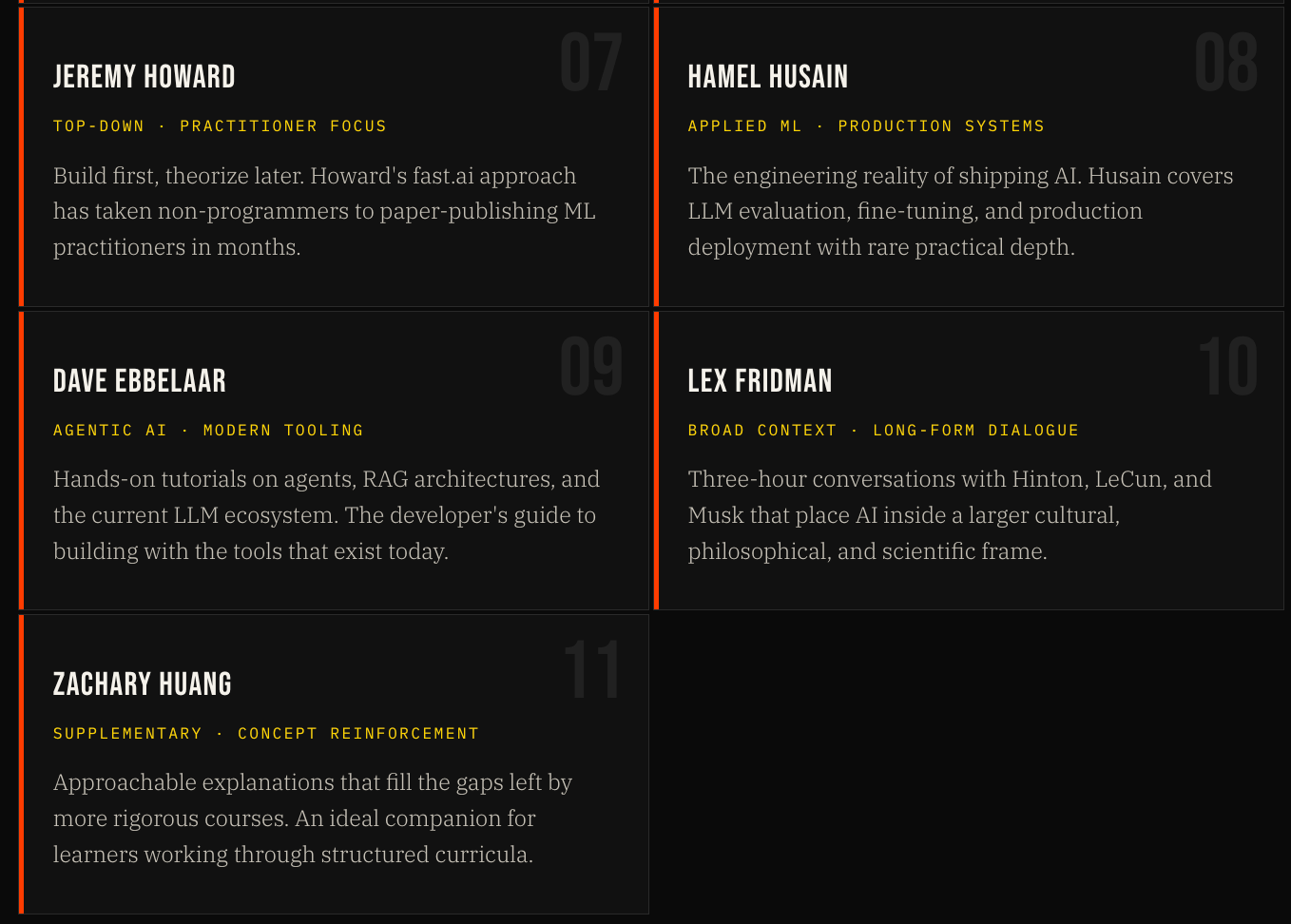

Jeremy Howard is a heretic, and he is proud of it. As the co-founder of fast.ai, he has spent years arguing against the conventional wisdom that you must master statistics, then linear algebra, then calculus, then coding, before you are permitted to touch a neural network. His counter-proposal: start with a working model on day one. Get something running. Get the feeling of it. Then, and only then, start peeling back the layers to understand what's underneath.

His YouTube channel and associated courses embody this philosophy completely. In Howard's world, a beginner's first session ends with a trained image classifier, not a set of solved differential equations. This approach has proven transformative for a specific and crucial demographic: smart people who lack formal technical backgrounds but possess the motivation to learn. Lawyers, doctors, biologists, journalists — people who need AI tools but have neither the time nor the inclination to complete a four-year computer science degree — have found in Howard's method a viable path that actually leads somewhere.

The fast.ai community that has grown up around his channel is, by some measures, one of the most remarkable self-organizing educational ecosystems on the internet. Alumni have published peer-reviewed papers, built production AI systems, and won international machine learning competitions — some within months of watching their first video.

The Statistics Floor

One of the least glamorous and most essential figures in this ecosystem is Josh Starmer of StatQuest. In a field dazzled by its own frontier (transformers, diffusion models, chain-of-thought prompting), Starmer has spent years explaining the statistical machinery that underlies everything else. His explanations of principal component analysis, regularization, and ensemble methods are widely regarded in the ML community as among the clearest available anywhere, at any price.

What Starmer understood, and what many YouTube educators underestimate, is that most people fail to learn machine learning not because they can't grasp deep learning concepts, but because their statistical foundation is full of holes. StatQuest patches those holes, one joyfully illustrated video at a time. Serrano Academy, run by Luis Serrano, occupies a similar but distinct role — where Starmer is your statistical mechanic, Serrano is your conceptual guide. Together, they form something like a complete lower-division math curriculum: free, navigable on a phone, and designed by practitioners rather than accreditation committees.

From Theory to Production

There is a famous, frustrating gap in traditional AI education. A student can complete a rigorous university ML course, implement a dozen algorithms from scratch, and still have no idea how to actually deploy a model that real users depend on. This is the gap that Hamel Husain and Dave Ebbelaar have stepped into.

Husain is a former GitHub engineer with deep roots in the ML tooling ecosystem. He has built a following among the audience that needs him most: software engineers who have been asked by their companies to "do something with AI" and are trying to figure out what that actually means. His content on LLM evaluation, meaning how you measure whether a language model is actually doing what you need it to do in your specific production context, addresses a problem that textbooks have barely begun to cover because the problem is so new. Ebbelaar works the adjacent territory: the agentic systems, retrieval-augmented generation architectures, and orchestration patterns that have become the dominant paradigm for building LLM-powered applications.
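Here is a minimal sketch of the kind of evaluation loop this work revolves around: a fixed set of test cases, each paired with a check that decides whether the model's output is acceptable. `call_model` is a hypothetical stand-in for whatever LLM client a team actually uses. The cases and checks are illustrative assumptions and are not taken from Husain's materials.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass
class EvalCase:
    name: str
    prompt: str
    check: Callable[[str], bool]  # returns True if the model output is acceptable

def call_model(prompt: str) -> str:
    """Hypothetical stand-in for a real LLM call; swap in your provider's client here."""
    canned = {
        "Extract the invoice total from: 'Total due: $42.00'": "42.00",
        "Reply with a single word: is the sky blue?": "Yes",
    }
    return canned.get(prompt, "")  # unknown prompts get an empty answer

def run_evals(cases, model=call_model):
    """Run every case through the model and return (pass rate, per-case results)."""
    results = {case.name: case.check(model(case.prompt)) for case in cases}
    return sum(results.values()) / len(cases), results

cases = [
    EvalCase("invoice_total", "Extract the invoice total from: 'Total due: $42.00'",
             lambda out: out.strip() == "42.00"),
    EvalCase("one_word", "Reply with a single word: is the sky blue?",
             lambda out: len(out.split()) == 1),
    EvalCase("non_empty_summary", "Summarize: the meeting moved to Tuesday.",
             lambda out: len(out.strip()) > 0),
]

score, detail = run_evals(cases)
print(f"pass rate: {score:.0%}")
print(detail)
```

Domain-specific checks like these are cheap to rerun whenever a prompt or model changes. Rerunning them turns ad hoc spot checks into a repeatable measurement.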

The Frontier in Real Time

Machine Learning Street Talk occupies a different ecological niche than every other channel discussed here. Where most AI education channels are trying to move learners forward from where they are, MLST is trying to take people who already know the field and force them to confront what they don't yet understand. Its hosts conduct long, technically rigorous interviews with the researchers actually publishing the papers that matter — the people arguing about whether scaling laws will hold, whether current alignment approaches are adequate, where the wall is.

For intermediate-to-advanced practitioners, MLST serves a function that no university course can provide: it keeps you calibrated to the actual frontier rather than the version of the frontier that gets written up in textbooks three years later. And then there is Lex Fridman. His multi-hour conversations with Geoffrey Hinton, Yann LeCun, Sam Altman, and dozens of other central figures are less curricula than primary sources — documents of a field in the act of arguing with itself about what it is, what it's doing, and what it might become.

What Democratization Actually Means

Before this ecosystem existed, learning AI at a serious level required one of three things: enrollment at a research university with a strong CS program, employment at a company that paid for professional development, or the rare combination of extraordinary self-discipline and access to the right papers and books. All three pathways were heavily filtered by geography, socioeconomic background, and existing credentials. A self-taught programmer in Nairobi or Manila or rural Ohio had no realistic path to the kind of AI education available to a Stanford or MIT student.

That has changed. The curriculum described in this article is available to anyone with an internet connection. It is better than what most universities were teaching five years ago. It is kept current by people who are actively doing the research. And it costs nothing except time. The skills taught by these eleven channels — machine learning, deep learning, statistical reasoning, LLM evaluation and deployment — are among the most economically valuable on earth right now. YouTube doesn't care where you went to school.

The Credential Question

There is an obvious objection to everything described here, and it is worth taking seriously: none of it grants a credential. You can watch every video Andrej Karpathy has ever posted and emerge without a degree, a certificate, or any piece of paper that an HR department will recognize. In a labor market still organized around credentials, this matters.

But there are signs, imperfect and uneven but real, that the credential is being supplemented by a different currency: GitHub repositories, Kaggle rankings, published projects. This is demonstrated competence, built in public and visible to anyone who cares to look. The hiring practices of the most forward-thinking AI companies have begun to reflect this shift. Anthropic, OpenAI, Hugging Face, and dozens of well-funded AI startups have hired engineers whose most impressive credential is a GitHub profile and a track record of results.

The last gatekeepers are falling. They are falling because a handful of people (a former Tesla executive, a mathematical animator, a statistics professor who makes his own jingles, a fast.ai heretic, and several others) decided that what they knew was too important to keep behind a paywall. They were right. And the effects of that decision are only beginning to be felt.

The gate is open. It has been open for years. The only question now is who walks through it.

Your Watchlist — All 11 Channels

Every resource referenced in this article is free, available right now, and linked below. Bookmark this and return as you progress — the channels you skip in week one will become essential by month three.

CORRECTIONS & METHODOLOGY — Channel subscriber counts and view figures cited in this article reflect public data as of publication. Learning pathway recommendations represent editorial judgment informed by community consensus. No commercial relationships exist between the author and any YouTube channels referenced in this story.